Introduction

Gemini Nano is Google's compact, efficient AI model designed for on-device use. Announced in December 2023 alongside the rest of the Gemini family, it brings machine learning capabilities to smartphones, IoT gadgets, and other resource-constrained hardware, enabling real-time AI processing without relying on cloud connectivity.

What Is Gemini Nano?

Gemini Nano is the smallest member of Google’s Gemini model family, optimized for performance and energy efficiency. Key specifications include:

- Model Size: 250 million parameters

- Latency: Sub-50 ms inference on mid-range mobile chips

- Power Consumption: Less than 500 mW during inference

The model supports tasks such as natural language understanding, image recognition, and sensor data analysis, all on-device.
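To put the figures above in perspective, the short sketch below turns them into a weight-memory estimate and an energy bound per inference. It takes the quoted numbers at face value; the 32-bit floating-point baseline and the helper names are assumptions made for illustration, not published measurements.

```python
# Back-of-envelope estimates from the specifications quoted above.
# All inputs are the article's stated upper bounds, not measurements.

PARAMS = 250_000_000   # 250 million parameters
LATENCY_S = 0.050      # 50 ms upper bound per inference
POWER_W = 0.500        # 500 mW upper bound during inference


def weight_memory_mb(params: int, bits_per_weight: int) -> float:
    """Memory needed for the weights alone, in megabytes (10**6 bytes)."""
    return params * bits_per_weight / 8 / 1_000_000


def energy_per_inference_mj(power_w: float, latency_s: float) -> float:
    """Energy = power x time, reported in millijoules."""
    return power_w * latency_s * 1_000


if __name__ == "__main__":
    # Assumed baseline: 32-bit floats, compared with 8-bit integer weights.
    print(f"fp32 weights: {weight_memory_mb(PARAMS, 32):.0f} MB")
    print(f"int8 weights: {weight_memory_mb(PARAMS, 8):.0f} MB")
    print(f"energy bound: {energy_per_inference_mj(POWER_W, LATENCY_S):.0f} mJ")
```

Under these assumptions, 8-bit weights need about a quarter of the memory of 32-bit weights, and a single inference at the stated limits costs at most roughly 25 mJ. Activations, runtime overhead, and any caching are not included.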

Advantages for Edge AI

Edge AI refers to running AI workloads locally on devices rather than in centralized data centers. Gemini Nano offers several benefits:

- Privacy: Data stays on-device, reducing exposure of sensitive information.

- Reliability: Operates without network dependency, ensuring consistent performance in offline scenarios.

- Speed: Real-time inference enhances user experience in applications like voice assistants and augmented reality.

- Cost Efficiency: Lowers cloud compute costs and bandwidth usage.

Use Cases

Google and partner developers have demonstrated Gemini Nano in various applications:

- Smartphones: Enhanced voice recognition, real-time translation, and context-aware chat features.

- Wearables: Health monitoring with AI-driven anomaly detection in sensor data.

- Smart Home Devices: Local processing for security cameras and voice-controlled appliances.

- Industrial IoT: Predictive maintenance by analyzing machine sensor data on-site.
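The wearable and industrial examples above both come down to flagging unusual readings in a local sensor stream. As a rough illustration only, the sketch below uses a rolling z-score, a simple statistical stand-in rather than Gemini Nano or any Google API. The function name, window size, and threshold are hypothetical choices.

```python
from collections import deque
from statistics import mean, pstdev
from typing import Deque, Iterable, List


def detect_anomalies(readings: Iterable[float], window: int = 5,
                     threshold: float = 3.0) -> List[int]:
    """Return indices of readings that sit more than `threshold` standard
    deviations away from the mean of the previous `window` readings."""
    history: Deque[float] = deque(maxlen=window)
    flagged: List[int] = []
    for i, x in enumerate(readings):
        # Only score a reading once a full window of history is available.
        if len(history) == window:
            mu = mean(history)
            sigma = pstdev(history)
            # A perfectly flat window (sigma == 0) is skipped, not flagged.
            if sigma > 0 and abs(x - mu) > threshold * sigma:
                flagged.append(i)
        history.append(x)
    return flagged


if __name__ == "__main__":
    heart_rate = [70, 71, 69, 70, 71, 70, 120, 70, 69, 71]
    print(detect_anomalies(heart_rate))
```

Everything here runs in constant memory per stream, which is the property that matters on a wearable or an industrial gateway. A learned model would replace the z-score test, but the surrounding loop would look much the same.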

Technical Innovations

Gemini Nano achieves its efficiency through:

- Quantization: Storing weights as 8-bit integers instead of higher-precision floating-point values, without significant accuracy loss.

- Pruning: Removing redundant parameters based on usage patterns.

- Edge-Optimized Architectures: Custom layers that leverage vectorized operations on mobile NPUs.

These techniques ensure Gemini Nano maintains strong performance while fitting the constraints of edge hardware.
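The first two techniques can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not Google's implementation: quantization is shown as symmetric per-tensor 8-bit rounding, and because the pruning criterion is not specified in detail, weight magnitude is used as a common stand-in. All function names are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [v * scale for v in q]


def prune_by_magnitude(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the smallest-magnitude fraction of weights (assumed criterion)."""
    n_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:n_prune])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


if __name__ == "__main__":
    weights = [0.02, -1.27, 0.64, -0.01, 0.30, 0.90]
    pruned = prune_by_magnitude(weights, sparsity=0.5)
    q, scale = quantize_int8(pruned)
    print("pruned:   ", pruned)
    print("quantized:", q)
    print("restored: ", dequantize(q, scale))
```

Each restored weight differs from its original by at most half the scale factor, which is why 8-bit storage can preserve accuracy when the weight range is well behaved. Production toolchains typically add per-channel scales, calibration data, and fine-tuning after pruning, none of which are shown here.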

Developer Ecosystem

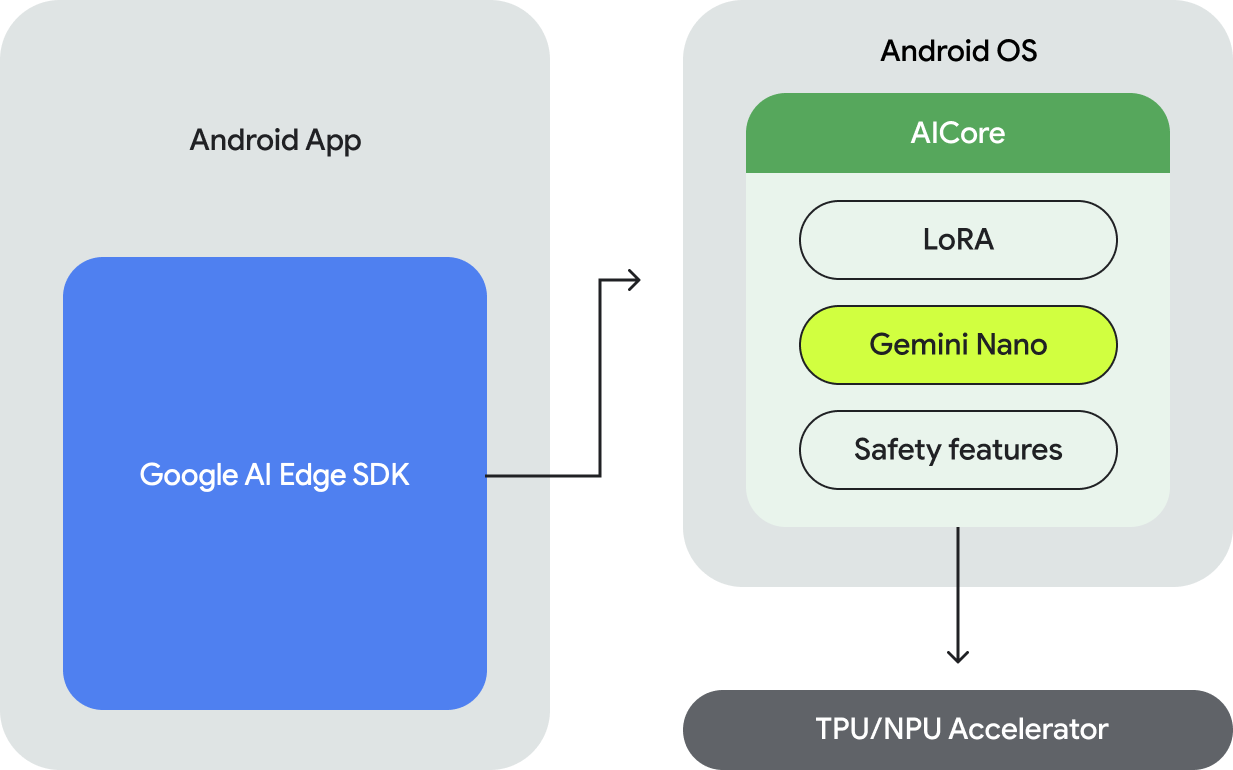

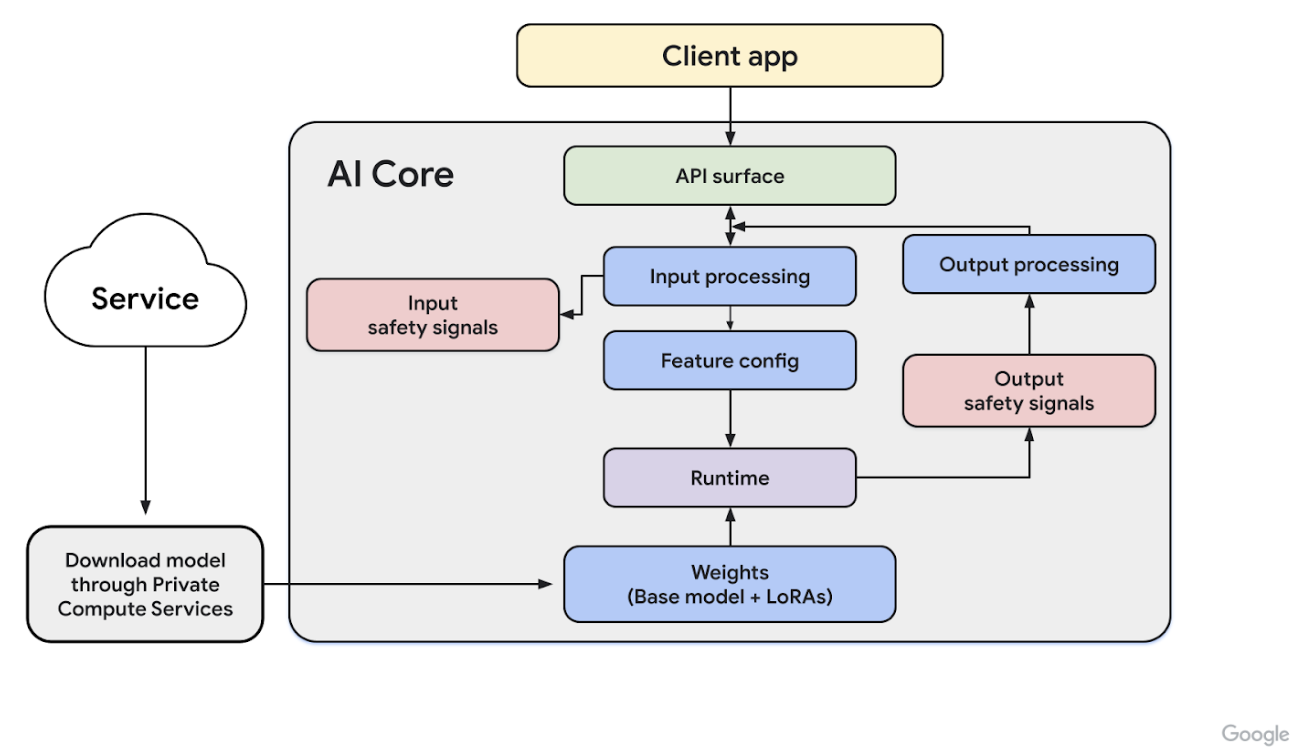

Google supports developers through:

- TensorFlow Lite Integration: Easy deployment on Android and embedded platforms.

- Edge TPU Compatibility: Acceleration on Google’s Coral devices.

- APIs and SDKs: Tools for model customization and performance profiling.

An active community is already building innovative apps, from AI-powered cameras to autonomous drones.

Conclusion

Gemini Nano represents a significant step in bringing advanced AI to the edge. By balancing performance with efficiency, Google enables a new generation of intelligent devices that operate securely and responsively. As edge computing grows, Gemini Nano is poised to play a key role in democratizing AI across industries.